Autodesk - Human-Robot Collaboration

Universal Robots helps Autodesk push the construction industry’s boundaries for human-robot collaboration.

01 THE SHORT STORY

Autodesk has chosen UR robots for a wide range of projects because of their safety features, which enable more “daring” research, and because of the cobots’ open architecture, which makes command streaming easy and supports quick iterations.

02 THE CHALLENGE

Autodesk makes software commonly used in the construction industry and wants to conduct thorough research into new solutions that enable its customers to work closely with robots on new tasks. While the manufacturing supply chain works to much tighter product tolerances, parts handled in construction typically vary far more, which challenges the repeatability and handling performance of automation solutions. Moving a robot around a rugged construction site and deploying it for different tasks is also difficult with traditional industrial robots, which usually stay in safety cages dedicated to a single task.

03 THE SOLUTION & THE HIVE PAVILION – HUMAN-ROBOT INTERACTION

Autodesk’s Robotics Lab is using Universal Robots’ collaborative robot arms (cobots) to address these challenges in research projects spanning human-robot interaction, machine learning, drawing, and smart assembly systems. Four recent projects include:

The HIVE Pavilion – Human-Robot Interaction

The HIVE was a pavilion built out of raw bamboo and fiber string in close collaboration among conference attendees at Autodesk University, the ICD at the University of Stuttgart, Autodesk’s Robotics Lab, and UR robots. The goal was for users to experience seamless integration of robotic manufacturing, wearables, RFID tracking, and intelligence embedded in the building pieces. It was a formidable challenge: raw bamboo is an uneven, flexible material, and its pieces vary in length and width. “When we started, we weren’t really sure to what extent we could work with our robot and help it understand the uncertainty and the variability that we were giving it,” explains Heather Kerrick, Senior Research Engineer at Autodesk’s Robotics Lab. “We were really proud of our ability to empower the robot by giving it sensors and decision-making abilities and then act on that accordingly.”

The HIVE was built at “winding stations,” where attendees fastened three randomly chosen pieces of bamboo onto a UR robot, which generated the movement sequence needed to hook fiber onto the tips of the bamboo and create a unique, tumbleweed-like tensegrity element. “The UR robots were able to offer very precise movements and very precise measurements that would have been difficult for a human to do on-site, so the human didn’t need anywhere near as many measuring tools or equipment,” says Kerrick. She also emphasizes the safety aspect: “We’re doing experimental research where the robots are moving based on real-time sensor data, so the chance of the robot doing something unexpected is really high.” Had her team used a larger, more industrial robot, she adds, they would not have been able to engage with the public in the same way, and the research project would have been much slower. “But with the Universal Robots, we were able to be a little more daring with our research because we could trust that the robot wouldn’t break itself, and wouldn’t pose a danger to others.” The Autodesk team successfully built the HIVE in three days.
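The case study does not describe how the HIVE winding stations computed their movement sequence. As a purely illustrative sketch, assuming the robot's sensors report the two tip positions of each bamboo piece in metres and that a winding order over those tips is supplied, waypoints could be derived by offsetting each measured tip outward along its stick's axis so the fiber passes just beyond the end. The `Stick` type, the `winding_waypoints` function, the `order` list, and the 3 cm default clearance are all hypothetical.

```python
import math
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


@dataclass
class Stick:
    """One bamboo piece, described by its two measured tip positions (metres).
    Hypothetical type: the real HIVE data model is not described in the source."""
    tip_a: Vec3
    tip_b: Vec3


def _unit(v: Vec3) -> Vec3:
    """Normalize a vector; reject degenerate sticks whose tips coincide."""
    norm = math.sqrt(sum(c * c for c in v))
    if norm == 0.0:
        raise ValueError("stick tips coincide; cannot compute an axis")
    return (v[0] / norm, v[1] / norm, v[2] / norm)


def _tip_frames(sticks: list[Stick]) -> list[tuple[Vec3, Vec3]]:
    """Return (tip, outward axis) pairs: index 2*k is tip_a of stick k, 2*k+1 is tip_b."""
    frames = []
    for s in sticks:
        axis = _unit(tuple(b - a for a, b in zip(s.tip_a, s.tip_b)))
        frames.append((s.tip_a, tuple(-c for c in axis)))  # tip_a points away from tip_b
        frames.append((s.tip_b, axis))                      # tip_b points away from tip_a
    return frames


def winding_waypoints(sticks: list[Stick], order: list[int],
                      clearance: float = 0.03) -> list[Vec3]:
    """Emit one waypoint per tip index in `order`, offset `clearance` metres beyond
    the tip along its stick's axis. The winding order is an assumed input here."""
    frames = _tip_frames(sticks)
    path = []
    for i in order:
        tip, outward = frames[i]
        path.append(tuple(t + clearance * o for t, o in zip(tip, outward)))
    return path
```

Because the offsets are computed from whatever tip positions are measured, each random set of bamboo pieces gets its own path, which is the kind of variability Kerrick describes.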

04 THE SOLUTION & VECTOR ARTWORK DRAWING – ROBOTIC PATH FOLLOWING

Being able to operate in an open space without safety guarding also landed the UR10 a cameo in “Artoo in Love,” a viral short film created by Evan Atherton, a research engineer at Autodesk. The film features a UR10 drawing portraits in a park. “Taking a robot to this unknown place was an interesting challenge,” explains Atherton. Together with colleagues, he calibrated the robot and wrote a simple program that directed it to follow the paths of a vector drawing projected onto a canvas. “The UR10 was perfect; it was small, mobile and safe. We could bring it out in a Pelican case. Had we used one of our traditional robots, it would have required a forklift and a safety cage so that would never have worked,” he says.
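The film's drawing program is not published. A minimal sketch of vector path following, assuming the calibration yields a canvas origin, two in-plane unit axes, and an outward normal in the robot base frame (all in metres), maps each 2-D polyline of the drawing onto that plane and emits URScript `movel` commands, lifting the pen between strokes. The function names, the fixed tool orientation `tool_rot` (an axis-angle vector), and the speed, acceleration, and lift defaults are hypothetical placeholders.

```python
Vec3 = tuple[float, float, float]
Point2 = tuple[float, float]


def canvas_to_base(origin: Vec3, u_axis: Vec3, v_axis: Vec3, normal: Vec3,
                   x: float, y: float, lift: float = 0.0) -> Vec3:
    """Map canvas coordinates (x, y) in metres to a point in the robot base frame.
    `lift` moves the point off the canvas along the (assumed outward) normal."""
    return tuple(origin[i] + x * u_axis[i] + y * v_axis[i] + lift * normal[i]
                 for i in range(3))


def drawing_to_urscript(polylines: list[list[Point2]], origin: Vec3, u_axis: Vec3,
                        v_axis: Vec3, normal: Vec3, tool_rot: Vec3,
                        pen_lift: float = 0.02, accel: float = 0.5,
                        speed: float = 0.1) -> str:
    """Build one URScript program that traces every polyline with the pen."""
    rx, ry, rz = tool_rot
    lines = ["def draw_vector():"]

    def move(p: Vec3) -> None:
        # movel(pose, a, v): linear move in tool space to pose p[x, y, z, rx, ry, rz]
        lines.append(f"  movel(p[{p[0]:.4f},{p[1]:.4f},{p[2]:.4f},"
                     f"{rx:.4f},{ry:.4f},{rz:.4f}], a={accel}, v={speed})")

    frame = (origin, u_axis, v_axis, normal)
    for poly in polylines:
        if not poly:
            continue
        move(canvas_to_base(*frame, *poly[0], lift=pen_lift))   # travel with pen up
        for x, y in poly:
            move(canvas_to_base(*frame, x, y))                  # pen on the canvas
        move(canvas_to_base(*frame, *poly[-1], lift=pen_lift))  # lift before next stroke
    lines.append("end")
    return "\n".join(lines) + "\n"
```

In practice the tool orientation and the sign of the normal would come from the calibration step the story mentions; the sketch simply takes them as inputs.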

05 THE SOLUTION & FLEXIBLE ROBOT ASSISTANCE ON CONSTRUCTION SITES

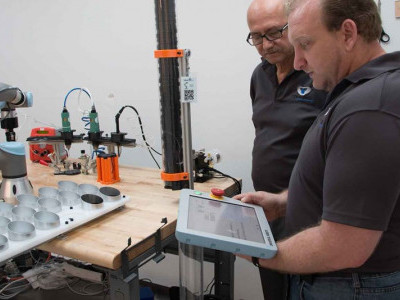

The UR robots’ built-in safety features also prompted Autodesk to develop a prototype “robot assistant” that can be wheeled around construction sites. The research team put a router on the end of the robot arm, gave it a camera and a projector, and developed machine learning software that enabled the robot to recognize human gestures and voice commands. For instance, the UR10 can be rolled up to a piece of drywall and project the outline of an outlet onto the wall; the user can modify the outline and then use a voice command to tell the UR10 to go ahead and cut it out.

06 THE SOLUTION & SMART ASSEMBLY SYSTEMS

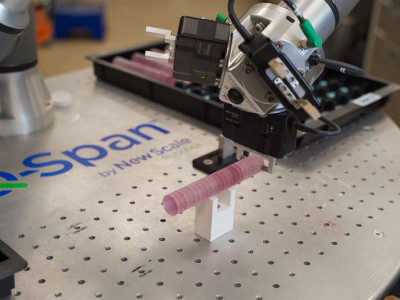

Another construction-industry challenge that Autodesk is addressing in its research with UR robots is smart assembly. The team’s “Brick-Bot” tackles three sub-problems: bin-picking, re-grasping, and placement. Using vision guidance, the robot can pick out a predefined brick from a jumble of bricks of different sizes and colors. If the brick is grasped in the wrong position for placement, the UR10 performs a visual survey and can reposition and re-grasp the brick until it sits correctly in the gripper. The final placement is also vision-guided: a second robot, a UR5, holds a camera to check the brick assembly. “The next iteration is to actually start assembling designs, for example a house out of Legos or a toy giraffe, and then have the robot automatically build it,” explains Yotto Koga, Software Architect at Autodesk, who emphasizes that being able to work right next to the robot is imperative in this process. “One of the major reasons we chose Universal Robots is because it’s safe to work around. I could literally connect the robot to my laptop, work next to it, and quickly iterate through our experiments without worrying about safety protocols slowing things down. This was very important for us to make progress in this project.”
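The Brick-Bot's software is not published. The pick, inspect, re-grasp, and place cycle described above can be sketched as a bounded retry loop, assuming hypothetical callbacks for the robot actions and a vision step that reports how far the brick sits from the desired grasp. The `GraspError` fields, the tolerance values, and the retry limit are illustrative assumptions, not values from the project.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class GraspError:
    """Hypothetical vision result: how far the brick sits from the desired grasp."""
    offset_mm: float  # lateral offset of the brick in the gripper
    angle_deg: float  # rotation relative to the desired grasp


def grasp_ok(err: GraspError, max_offset_mm: float = 2.0,
             max_angle_deg: float = 3.0) -> bool:
    """Accept the grasp only if both offset and rotation are within tolerance."""
    return abs(err.offset_mm) <= max_offset_mm and abs(err.angle_deg) <= max_angle_deg


def pick_and_place(pick: Callable[[], None],
                   inspect: Callable[[], GraspError],
                   regrasp: Callable[[GraspError], None],
                   place: Callable[[], None],
                   max_regrasps: int = 3) -> int:
    """Pick a brick, then inspect and re-grasp until the grasp is within tolerance.
    Returns the number of re-grasps used; raises RuntimeError if it never succeeds."""
    pick()
    for attempt in range(max_regrasps + 1):
        err = inspect()          # visual survey of the brick in the gripper
        if grasp_ok(err):
            place()              # placement itself is delegated to the callback
            return attempt
        if attempt < max_regrasps:
            regrasp(err)         # reposition and grasp again, using the measured error
    raise RuntimeError("brick could not be re-grasped within tolerance")
```

Bounding the loop keeps a brick that can never be grasped correctly from stalling the cell; the caller can then, for example, drop it back into the bin.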

The UR robots’ open APIs also enabled quick progress. “We were able to get pretty low-level control of the UR robots using the streaming API over TCP communication, which was vital to our particular needs, as we needed to directly access the robot, bypassing the robot’s own operating system,” explains Koga. His colleague Heather Kerrick recounts how the HIVE project benefited from the robot’s open architecture as well. “Building the HIVE meant working in a bunch of different coding languages and environments across teams and devices. We were able to simplify all of our commands into a single string that we could send to the robot,” she says. “With our larger industrial robots, there’s often extra steps or extra software that’s required in order to sidestep whatever native controls are built into the robot, which is not the case here. The scripting language for the UR is also very, very simple to learn and to use.”
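The story does not say which interface or port the teams used. As a minimal sketch of the “single string” approach, the snippet below wraps URScript commands into one program and writes it to a TCP socket on the controller. It assumes the controller accepts URScript on its secondary client interface, conventionally port 30002; verify this against your controller's documentation, since newer controllers may also require remote control to be enabled. `build_script` and `send_script` are hypothetical helper names.

```python
import socket


def build_script(name: str, commands: list[str]) -> str:
    """Wrap URScript commands in a single named program so they travel as one string."""
    body = "\n".join(f"  {cmd}" for cmd in commands)
    return f"def {name}():\n{body}\nend\n"


def send_script(host: str, script: str, port: int = 30002, timeout: float = 2.0) -> None:
    """Open a TCP connection to the controller and send the whole script at once.
    Port 30002 (secondary client interface) is an assumption; check your controller."""
    with socket.create_connection((host, port), timeout=timeout) as sock:
        sock.sendall(script.encode("utf-8"))
```

For example, `send_script("192.168.0.10", build_script("wave", ["movej([0, -1.57, 0, -1.57, 0, 0], a=1.0, v=0.5)"]))` would send a one-move program, where the IP address is a placeholder. Because any language that can open a TCP socket can produce this string, teams working in different environments can share one command format, which is the benefit Kerrick describes.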