Deep Learning Algorithms for Vision Systems

Which deep learning neural networks are best suited for industrial vision systems and why?

Human vision is the result of millions of years of evolution, beginning with organisms that acquired mutations allowing them to respond to light. It is one of the most advanced vision systems among all living organisms. In humans, visual processing takes place largely in a region of the brain called the occipital lobe. Although we still do not fully understand how human vision works, it is clearly an incredibly complex task, and that complexity becomes evident when we try to make computers see.

Need for Deep Learning

Images are made of thousands, often millions, of pixels. Each pixel is a small, single-color square. A pixel’s color is represented as a set of numerical values corresponding to the amounts of red, green, and blue (RGB) in that pixel; in the common 8-bit format, each value ranges from 0 to 255. For example, the color of the block in figure 1 is [198 226 255] in the RGB scheme.

Figure 1. A block of color with the pixel color [198 226 255].

A computer understands an image only as a set of RGB values, one for each pixel square in the image. The unique RGB colors in Control Automation’s logo (figure 2), obtained via a Python script, are the following.

Figure 2. Control Automation’s logo.

[[ 0 0 0]

[ 49 12 12]

[197 48 48]

[197 48 49]

[197 49 48]

[197 49 49]

[198 48 48]

[198 48 49]

[198 49 48]

[198 49 49]

[199 48 48]

[199 48 49]

[199 49 48]

[199 49 49]

[199 49 50]

[199 50 49]

[199 50 50]

[200 48 49]

[200 49 48]

[200 49 49]

[200 49 50]

[200 50 49]

[200 50 50]

[201 49 49]

[201 49 50]

[201 50 49]

[201 50 50]

[201 50 51]

[201 51 50]

[201 51 51]

[202 49 49]

[202 49 50]

[202 50 49]

[202 50 50]

[202 50 51]

[202 51 50]

[202 51 51]

[203 50 50]

[203 50 51]

[203 51 50]

[203 51 51]

[204 50 50]

[204 50 51]

[204 51 50]

[204 51 51]

[204 51 52]

[204 52 51]

[204 52 52]

[205 51 51]

[205 51 52]

[205 52 51]

[205 52 52]

[206 51 51]

[206 51 52]

[206 52 51]

[206 52 52]

[206 52 53]

[206 53 52]

[206 53 53]

[207 52 52]

[207 52 53]

[207 53 52]

[207 53 53]

[208 52 52]

[208 52 53]

[208 53 52]

[208 53 53]

[208 53 54]

[208 54 53]

[208 54 54]

[209 52 53]

[209 53 52]

[209 53 53]

[209 53 54]

[209 54 53]

[209 54 54]

[210 53 53]

[210 53 54]

[210 54 53]

[210 54 54]

[210 54 55]

[210 55 54]

[210 55 55]

[211 53 53]

[211 53 54]

[211 54 53]

[211 54 54]

[211 54 55]

[211 55 54]

[211 55 55]

[212 54 54]

[212 54 55]

[212 55 54]

[212 55 55]

[213 54 54]

[213 54 55]

[213 55 54]

[213 55 55]

[213 55 56]

[213 56 55]

[213 56 56]

[214 55 55]

[214 55 56]

[214 56 55]

[214 56 56]

[215 55 55]

[215 55 56]

[215 56 55]

[215 56 56]

[215 56 57]

[215 57 56]

[215 57 57]

[216 56 56]

[216 56 57]

[216 57 56]

[216 57 57]

[217 56 56]

[217 56 57]

[217 57 56]

[217 57 57]

[217 57 58]

[217 58 57]

[217 58 58]

[218 56 56]

[218 56 57]

[218 57 56]

[218 57 57]

[218 57 58]

[218 58 57]

[218 58 58]

[219 57 57]

[219 57 58]

[219 57 59]

[219 58 57]

[219 58 58]

[219 58 59]

[219 58 60]

[219 59 58]

[220 57 57]

[220 57 58]

[220 57 59]

[220 57 60]

[220 58 57]

[220 58 58]

[220 58 59]

[220 58 60]

[220 59 58]

[220 59 59]

[220 59 60]

[220 60 59]

[221 57 59]

[221 57 60]

[221 58 58]

[221 58 59]

[221 58 60]

[221 59 58]

[221 59 59]

[221 59 60]

[221 60 58]

[221 60 59]

[222 58 58]

[222 58 59]

[222 59 58]

[222 59 59]

[222 59 60]

[222 60 59]

[222 60 60]

[223 58 59]

[223 59 58]

[223 59 59]

[223 59 60]

[223 60 59]

[223 60 60]

[224 59 59]

[224 59 60]

[224 60 59]

[224 60 60]

[224 60 61]

[224 61 60]

[224 61 61]

[225 59 60]

[225 60 59]

[225 60 60]

[225 60 61]

[225 61 60]

[225 61 61]

[226 60 60]

[226 60 61]

[226 61 60]

[226 61 61]

[226 61 62]

[226 62 61]

[226 62 62]

[227 60 60]

[227 60 61]

[227 61 60]

[227 61 61]

[227 61 62]

[227 62 61]

[227 62 62]

[228 61 61]

[228 61 62]

[228 62 61]

[228 62 62]

[229 61 61]

[229 61 62]

[229 62 61]

[229 62 62]]

The number of times each of those colors appears in the image is as follows.

[ 1368 16 39287 434 405 190 9274 2239 1045 1028 145 498

537 3662 127 65 11 3 97 3877 554 965 387 379

788 1146 3682 20 17 2 12 30 76 3570 532 540

260 1115 807 839 9476 15 252 107 3496 574 250 90

2658 589 460 553 83 214 204 4593 220 199 40 2830

675 1046 4206 147 347 363 1005 43 32 4 10 11

2228 392 741 210 739 755 734 2145 8 5 1 21

105 1557 3787 454 139 5369 295 337 321 785 27 74

96 3845 232 230 67 1640 8482 1049 971 187 354 290

5721 54 57 10 1236 321 305 252 280 614 1152 4126

225 28 1 3 68 56 4220 390 446 200 694 834

2 884 1987 46 2 1 39 2193 218 8 195 5844

451 5 432 145 1 1 3 4 1887 1005 6 701

1353 2 5 2 112 1430 343 9064 147 135 25 2

2 3175 795 985 699 253 2042 617 2680 37 29 7

11 20 5327 785 945 1610 845 1438 1059 3187 2 1

1 31 134 124 7809 767 8255 284 1621 1139 1129 1400

66 208 217 1774]

This is all a computer knows about the image. It does not understand that the words in the image are “CONTROL” and “AUTOMATION,” nor that the “O” is shaped to resemble gear teeth. On its own, a computer cannot understand what a logo is; that requires machine learning and deep learning.

Note: The image used for the Python script was a .webp file with a transparent background, which is why white ([255 255 255]) is absent from the result. The white background visible behind the logo on this page is the color of the webpage itself; there is no white in the actual image.
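The Python script behind the lists above is not shown. One way to produce the same kind of output is NumPy’s `np.unique` with `axis=0` and `return_counts=True`, which returns each distinct [R G B] row, sorted lexicographically (the same order as the color list above), together with the number of pixels that have it. The sketch below is an assumption, not the original script; the function name `color_histogram` and the tiny test image are illustrative.

```python
import numpy as np


def color_histogram(image: np.ndarray) -> tuple[np.ndarray, np.ndarray]:
    """Return the unique RGB triples in an H x W x 3 image and how often each occurs.

    With axis=0, np.unique treats each row (one pixel's [R, G, B]) as a single item
    and returns the rows sorted lexicographically, plus a count for each row.
    """
    pixels = image.reshape(-1, 3)  # flatten to one row per pixel
    colors, counts = np.unique(pixels, axis=0, return_counts=True)
    return colors, counts


# Hypothetical 2 x 2 image: three pixels of the figure 1 color and one black pixel.
image = np.array(
    [[[198, 226, 255], [198, 226, 255]],
     [[198, 226, 255], [0, 0, 0]]],
    dtype=np.uint8,
)
colors, counts = color_histogram(image)
print(colors.tolist())  # [[0, 0, 0], [198, 226, 255]]
print(counts.tolist())  # [1, 3]
```

To try it on a real file, one option (assuming Pillow is installed; the file name is hypothetical) is `color_histogram(np.asarray(Image.open("logo.webp").convert("RGB")))`. Converting to `"RGB"` drops the alpha channel rather than painting transparent areas white.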

Convolutional Neural Network (CNN)

The first step for a vision system is understanding the objects in an image, and convolutional neural networks (CNNs) are widely used to do so. Using a conventional, fully connected artificial neural network (ANN) for image recognition is highly resource-intensive, because every pixel value is connected to every neuron in the next layer. A CNN is a better solution for image recognition.
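The resource difference can be made concrete by counting trainable weights. The sketch below assumes a 224 x 224 RGB input (a common but arbitrary size), 64 output neurons or filters, and a 3 x 3 filter size; the function names are hypothetical. A fully connected layer needs one weight per input value per neuron, while a convolutional layer’s filter weights are shared across every position in the image.

```python
def dense_params(height: int, width: int, channels: int, units: int) -> int:
    """Weights + biases for a fully connected layer that sees every pixel value."""
    inputs = height * width * channels
    return inputs * units + units  # one weight per input per unit, one bias per unit


def conv_params(kernel: int, in_channels: int, filters: int) -> int:
    """Weights + biases for a 2D convolution layer; independent of image size."""
    return kernel * kernel * in_channels * filters + filters


# Hypothetical sizes: a 224 x 224 RGB image and 64 output units / filters.
print(dense_params(224, 224, 3, 64))  # 9633856
print(conv_params(3, 3, 64))          # 1792
```

The convolutional layer’s weight count does not depend on the image size at all, although the amount of computation still grows with the number of positions the filter visits.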

Take, for example, a computer trying to identify the “T” in this website’s logo. We know that a T is a horizontal line perched atop a vertical line. Neural networks can use filters to identify the contents of an image, and to identify the letter T, we need three filters:

- A horizontal line

- A vertical line

- The horizontal line is atop the vertical line

A CNN performs a convolution operation to detect each of these three features. It slides a filter across the grid of pixels and, at each position, multiplies the filter’s weights by the pixel values beneath it and sums the results. The output is a feature map that shows where in the image the filter’s pattern is present.

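If all three filters are present, the letter T is identified. Similarly, a well-trained CNN can identify every letter in an image, and scaled-up CNNs can be trained to detect a wide variety of objects. In a real CNN, the filters are not designed by hand; their weights are learned from training data.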
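The three-filter idea for the letter T can be sketched in a few lines of NumPy. This is a hand-built illustration, not how a trained CNN is configured: the 5 x 5 binary image, the two line filters, and the rule standing in for the third filter (a horizontal line whose middle sits directly above the top of a vertical line) are all hypothetical. In a real CNN, that third filter would be a learned filter in a deeper layer operating on the first layer’s feature maps. Like the convolution layers in common deep learning libraries, `conv2d` below computes a cross-correlation (the filter is not flipped).

```python
import numpy as np

# Hypothetical 5 x 5 binary image of a "T": 1 = ink, 0 = background.
T_IMAGE = np.array([
    [1, 1, 1, 1, 1],
    [0, 0, 1, 0, 0],
    [0, 0, 1, 0, 0],
    [0, 0, 1, 0, 0],
    [0, 0, 1, 0, 0],
])

HORIZONTAL = np.array([[1, 1, 1]])    # filter 1: a short horizontal line
VERTICAL = np.array([[1], [1], [1]])  # filter 2: a short vertical line


def conv2d(image: np.ndarray, kernel: np.ndarray) -> np.ndarray:
    """Slide `kernel` over `image` (no padding, stride 1) and return the feature map."""
    kh, kw = kernel.shape
    out_h, out_w = image.shape[0] - kh + 1, image.shape[1] - kw + 1
    feature_map = np.zeros((out_h, out_w), dtype=int)
    for r in range(out_h):
        for c in range(out_w):
            # Weighted sum of the pixels currently under the filter.
            feature_map[r, c] = np.sum(image[r:r + kh, c:c + kw] * kernel)
    return feature_map


def looks_like_t(image: np.ndarray) -> bool:
    # A position "matches" a filter when every pixel under the filter is ink.
    h_hits = np.argwhere(conv2d(image, HORIZONTAL) == HORIZONTAL.sum())
    v_hits = {(int(r), int(c)) for r, c in np.argwhere(conv2d(image, VERTICAL) == VERTICAL.sum())}
    # Stand-in for filter 3: a horizontal match (row r, columns c..c+2) whose
    # middle pixel (column c+1) sits directly above the top of a vertical match.
    return any((int(r) + 1, int(c) + 1) in v_hits for r, c in h_hits)


print(conv2d(T_IMAGE, HORIZONTAL)[0])  # [3 3 3]: the top row is a full horizontal line
print(looks_like_t(T_IMAGE))           # True
print(looks_like_t(T_IMAGE[::-1]))     # False: an upside-down T has its bar at the bottom
```

Both line filters still fire on the upside-down T; only the arrangement check fails, which is why the third filter is needed.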

CNNs require fewer resources than fully connected ANNs to accomplish the same task, because each filter’s small set of weights is reused at every position in the image. In simple words, a CNN is a shortcut around the cost of a fully connected ANN. Using CNNs, computers can understand the objects in an image. Applied to the logo of this website, a CNN could:

- Identify the letters in the logo.

- Understand that the letters form two separate words.

- Recognize that the two words are CONTROL and AUTOMATION.

- Recognize that the first O in the logo is shaped like a gear, and so on.

Recurrent Neural Network (RNN)

Vision systems are not based solely on identifying images. They should also understand what is happening in a video clip or video stream. Conveniently, a video is simply a sequence of images (frames). However, identifying the objects in each frame is not sufficient to interpret a video.

Figure 3. An adjustable guard with an open/close function so that operators can provide maintenance. Image used courtesy of OSHA

Consider a video of a machine guard moving up and down, like the one in figure 3. The video comprises many frames, and the guard’s position changes in each one. Identifying the guard in every frame is not enough on its own.

When all the frames are analyzed together, the guard’s position changes from frame to frame, so we can conclude that the guard is moving. The order of the frames, combined with the position changes, tells us that the guard is moving from the top to the bottom.
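Once a detector has located the guard in each frame, the reasoning in this paragraph reduces to comparing positions across frames in their correct order. The sketch below is a hand-written rule rather than a neural network. It assumes hypothetical per-frame vertical positions in pixels, measured from the top of the frame (so a larger value means lower in the frame), and a hypothetical `tolerance` for detector jitter.

```python
def guard_motion(y_positions: list[float], tolerance: float = 1.0) -> str:
    """Classify motion from the guard's vertical position in consecutive frames.

    y_positions: one value per frame, in frame order, in pixels from the top
    of the frame (larger = lower). Changes within `tolerance` count as no movement.
    """
    if len(y_positions) < 2:
        return "unknown"  # a single frame cannot show motion
    steps = [after - before for before, after in zip(y_positions, y_positions[1:])]
    if all(step > tolerance for step in steps):
        return "moving down"
    if all(step < -tolerance for step in steps):
        return "moving up"
    if all(abs(step) <= tolerance for step in steps):
        return "stationary"
    return "mixed"


frames = [40.0, 80.0, 120.0, 160.0]              # hypothetical detections, top to bottom
print(guard_motion(frames))                      # moving down
print(guard_motion(frames[::-1]))                # moving up
print(guard_motion([120.0, 40.0, 160.0, 80.0]))  # mixed: same detections, wrong order
```

The last call uses exactly the same detections in a scrambled order and no longer yields a clear direction, which is the point of the paragraph: the sequence carries information that no single frame does. An RNN learns this kind of order-dependent reasoning from data instead of having it written by hand.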

A CNN applied to each frame, however, can only identify the objects in that frame. A recurrent neural network (RNN) is needed to string together the insights from all the frames and identify the video’s context. CNNs capture spatial patterns, which is what makes them good at identifying objects. RNNs work with temporal data: data with a time component.

RNNs are used on datasets in which order matters. In a video pipeline, a CNN analyzes each frame, and its output is fed to an RNN that handles the temporal information in the video.
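The CNN-to-RNN pipeline described here can be sketched with a minimal (Elman-style) recurrent cell in NumPy. Everything in the sketch is an assumption for illustration: the per-frame feature vectors are random stand-ins for CNN outputs, the dimensions are arbitrary, and the weights are random rather than trained. What it does show is the recurrence itself: at each frame, the hidden state is updated from the current frame’s features and the previous hidden state, h_t = tanh(W_x x_t + W_h h_(t-1) + b), so the final state depends on the order of the frames.

```python
import numpy as np

rng = np.random.default_rng(0)

FEATURE_DIM = 8  # hypothetical size of the per-frame CNN feature vector
HIDDEN_DIM = 4   # hypothetical size of the RNN's memory (hidden state)

# Untrained, randomly initialized weights; a real system would learn these.
W_x = rng.normal(scale=0.5, size=(HIDDEN_DIM, FEATURE_DIM))
W_h = rng.normal(scale=0.5, size=(HIDDEN_DIM, HIDDEN_DIM))
b = np.zeros(HIDDEN_DIM)


def rnn_summarize(frame_features: np.ndarray) -> np.ndarray:
    """Run a simple RNN over a T x FEATURE_DIM sequence and return the final hidden state."""
    h = np.zeros(HIDDEN_DIM)
    for x_t in frame_features:  # one step per video frame, in order
        # The new state mixes this frame's features with the memory of earlier frames.
        h = np.tanh(W_x @ x_t + W_h @ h + b)
    return h


# Stand-in for CNN outputs: 5 frames, each reduced to an 8-number feature vector.
frames = rng.normal(size=(5, FEATURE_DIM))
forward = rnn_summarize(frames)
backward = rnn_summarize(frames[::-1])  # same frames, reversed order
print(forward.shape)                    # (4,)
print(np.allclose(forward, backward))   # False: the summary depends on frame order
```

In practice, gated variants such as LSTMs and GRUs are used more often than this plain cell, because plain RNNs struggle to retain information across long sequences.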

In the context of vision systems, RNNs handle video data, but most RNN use cases are in natural language processing (NLP).

Mimicking human vision is one of the most challenging tasks an AI system can undertake. Interpreting every pixel in an image, recognizing objects, and combining them with context from the rest of the frames in a video stream is an immense and complex procedure. A CNN can recognize the objects in each video frame, while an RNN can bring together the video’s context.

As with every machine learning model, CNNs and RNNs must be trained on data to be usable. In most applications, the amount of data required to train these deep learning models is enormous, which is one reason truly human-like vision systems are not yet a reality.

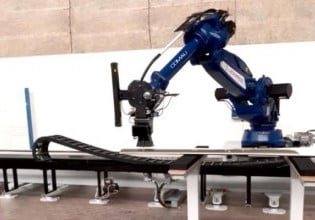

Featured image used courtesy of TELEDYNE FLIR